NVIDIA GPU Boost 2.0 is the second-generation GPU clocking technology that converts available power headroom into increased GPU performance.

On February 19, 2013, NVIDIA introduced the GeForce GTX Titan as part of the GeForce GTX 700 series product line. The GTX Titan sports the big Kepler GPU (GK110, with 14 of its 15 SMX units enabled) and is a significant step up from the GeForce GTX 680 in both price and performance. With the GeForce GTX Titan, NVIDIA also introduced the GPU Boost 2.0 technology.

GPU Boost 2.0 is a small but significant improvement over GPU Boost 1.0, as it shifts from a predominantly power-based boost algorithm to a predominantly temperature-based one. Including temperature in the boost algorithm makes a lot of sense from both a performance and a semiconductor reliability perspective.

First, with GPU Boost 1.0, NVIDIA made conservative assumptions about temperatures. Consequently, the allowed boost voltages were based on worst-case temperature assumptions. So, as you’d get worst-case boost voltages and associated operating frequency, you’d also get worst-case performance.

Second, semiconductor reliability is strongly correlated with operating temperature and operating voltage. For a given frequency, you need a higher operating voltage to maintain reliability at a higher temperature. Alternatively, at a lower operating temperature, you can use a lower voltage and retain reliability for a given frequency.

With GPU Boost 2.0, NVIDIA better maps the relationship between voltage, temperature, and reliability. The algorithm aims to prevent high voltage from being combined with a high operating temperature, since that combination lowers reliability. That enables NVIDIA to allow for higher operating voltages both at stock and in the form of over-voltage options for enthusiasts.
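To make the idea concrete, here is a minimal sketch of a temperature-aware voltage cap. NVIDIA's actual mapping is not public, so everything here is an assumption for illustration: the function name `max_allowed_voltage`, the linear shape of the derating, and all voltage and temperature values are hypothetical placeholders.

```python
def max_allowed_voltage(temp_c: float,
                        v_cool: float = 1.20,   # hypothetical cap when the GPU is cool (V)
                        v_hot: float = 1.10,    # hypothetical cap when the GPU is hot (V)
                        t_cool: float = 60.0,   # hypothetical "cool" threshold (deg C)
                        t_hot: float = 90.0) -> float:
    """Return the highest voltage permitted at a given GPU temperature.

    Assumption: the cap falls linearly from v_cool at t_cool to v_hot at
    t_hot, so high voltage is never paired with high temperature.
    """
    if temp_c <= t_cool:
        return v_cool
    if temp_c >= t_hot:
        return v_hot
    frac = (temp_c - t_cool) / (t_hot - t_cool)
    return v_cool - frac * (v_cool - v_hot)


if __name__ == "__main__":
    for t in (50, 75, 95):
        print(f"{t} C -> {max_allowed_voltage(t):.3f} V")
```

A worst-case design, as described for GPU Boost 1.0, would correspond to always returning `v_hot` regardless of the measured temperature.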

The result is, according to NVIDIA, that GPU Boost 2.0 offers two layers of additional performance improvement over GPU Boost 1.0.

- First, a higher default operating voltage (Vrel) enables higher out-of-the-box frequencies at lower temperatures.

- Second, ecosystem partners can extend the voltage range up to Vmax if the end-user is willing to trade in some reliability.

While it may seem that the over-voltage is a welcome return to manual overclocking, it is essential to note that NVIDIA still determines the voltage range between Vrel and Vmax. Board partners have the option to allow end-users to access this additional range but cannot customize either voltage limit.
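The relationship between Vrel, Vmax, and the over-voltage opt-in can be sketched as a simple clamp. This is only an illustration of the rule described above: the names `VoltageLimits`, `voltage_ceiling`, and `clamp_request` are hypothetical, and the voltages are placeholder values, not figures for any specific card.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VoltageLimits:
    """Limits fixed by NVIDIA; frozen because partners cannot change them."""
    v_rel: float  # default reliability voltage (V)
    v_max: float  # upper bound reachable only with over-voltage (V)


def voltage_ceiling(limits: VoltageLimits, overvoltage_enabled: bool) -> float:
    """Over-voltage only unlocks the predefined Vrel..Vmax range."""
    return limits.v_max if overvoltage_enabled else limits.v_rel


def clamp_request(requested_v: float, limits: VoltageLimits,
                  overvoltage_enabled: bool) -> float:
    """Clamp a requested voltage to whatever ceiling currently applies."""
    return min(requested_v, voltage_ceiling(limits, overvoltage_enabled))


if __name__ == "__main__":
    limits = VoltageLimits(v_rel=1.15, v_max=1.20)  # placeholder values
    print(clamp_request(1.25, limits, overvoltage_enabled=False))  # capped at v_rel
    print(clamp_request(1.25, limits, overvoltage_enabled=True))   # capped at v_max
```

Note that no request can exceed `v_max`, which mirrors the point that the end-user gains access to the extra range but not control over its limits.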

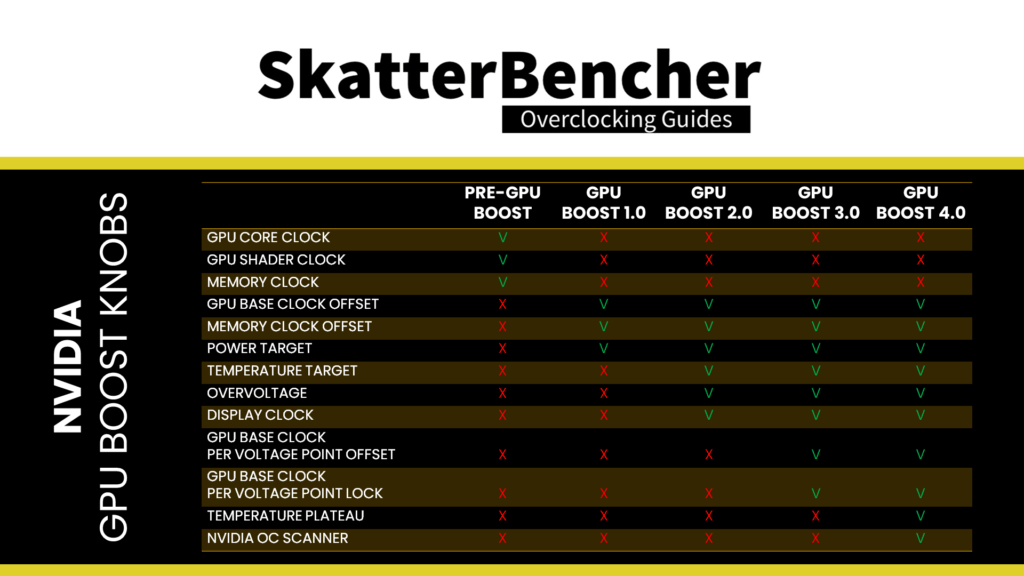

To summarize, GPU Boost 2.0 offers the following overclocking parameters:

- Power target

- Temperature target

- Overvoltage

- GPU Clock Offset

- Memory Clock Offset

- Display Clock
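As a rough illustration, the six parameters above could be grouped into a single settings object, as an overclocking tool might do internally. The class name, field names, units, and default values below are all assumptions made for this sketch and do not correspond to any real NVIDIA or partner API.

```python
from dataclasses import dataclass


@dataclass
class Boost2Settings:
    """Hypothetical container for the GPU Boost 2.0 tuning knobs."""
    power_target_pct: int = 100         # percent of the board's TDP (assumed unit)
    temp_target_c: int = 80             # placeholder default, deg C
    overvoltage_enabled: bool = False   # unlocks the Vrel..Vmax range
    gpu_clock_offset_mhz: int = 0
    memory_clock_offset_mhz: int = 0
    display_clock_hz: int = 60          # monitor refresh rate


if __name__ == "__main__":
    stock = Boost2Settings()
    tuned = Boost2Settings(power_target_pct=106, temp_target_c=85,
                           gpu_clock_offset_mhz=50)
    print(stock)
    print(tuned)
```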

The Power Target is simplified from GPU Boost 1.0, as there is now only one power parameter: TDP.

The temperature target is introduced with GPU Boost 2.0 and can either be linked to the power target or set independently. The default temperature target is different from the maximum temperature, or TjMax, and users can change the target. The temperature target feeds into both the boost algorithm and the fan curve. A higher temperature target means you can boost to higher frequencies with lower fan speed.
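The effect of the temperature target can be sketched as one step of a simple control loop: raise the clock while the GPU is under both targets, lower it otherwise. This is not NVIDIA's algorithm; the function name `next_clock_mhz`, the step size, and the floor and ceiling clocks are hypothetical placeholders chosen for illustration.

```python
def next_clock_mhz(current_mhz: int,
                   temp_c: float,
                   power_w: float,
                   temp_target_c: float,
                   power_target_w: float,
                   floor_mhz: int = 800,      # placeholder lowest clock
                   ceiling_mhz: int = 1000,   # placeholder highest boost clock
                   step_mhz: int = 13) -> int:  # placeholder step size
    """Return the clock for the next interval.

    Assumption: the clock moves one step at a time, down when either
    target is exceeded and up otherwise, and stays within the
    floor..ceiling range.
    """
    over_target = temp_c > temp_target_c or power_w > power_target_w
    if over_target:
        return max(floor_mhz, current_mhz - step_mhz)
    return min(ceiling_mhz, current_mhz + step_mhz)


if __name__ == "__main__":
    # Same GPU state, two different temperature targets.
    print(next_clock_mhz(900, temp_c=85, power_w=200,
                         temp_target_c=80, power_target_w=250))  # steps down
    print(next_clock_mhz(900, temp_c=85, power_w=200,
                         temp_target_c=90, power_target_w=250))  # steps up
```

With the GPU at the same temperature, raising the target flips the decision from stepping down to stepping up, which is the behavior the paragraph above describes.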

Overvoltage enables or disables the predefined voltage range between Vrel and Vmax.

The GPU Clock and Memory Clock Offsets are the same as in GPU Boost 1.0.

Oh, right, and GPU Boost 2.0 also offers the ability to overclock the Display Clock, which overclocks your monitor by forcing it to run at a higher refresh rate.