Update On My 3.1GHz Intel Arc A380

In this blog post, I update you on the progress I’ve made overclocking the Intel Arc A380 to 3.1 GHz with custom overclocking tools.

As some of you may know, I purchased one of the GUNNIR Photon Arc A380 graphics cards at the beginning of this month from JD.com. I hoped it would be a relatively quick SkatterBencher video to put out in anticipation of the next-generation CPU platforms coming later this year. Unfortunately, things were a bit more complicated than I had initially hoped. So, as you can see from my channel uploads, I’m not quite there yet.

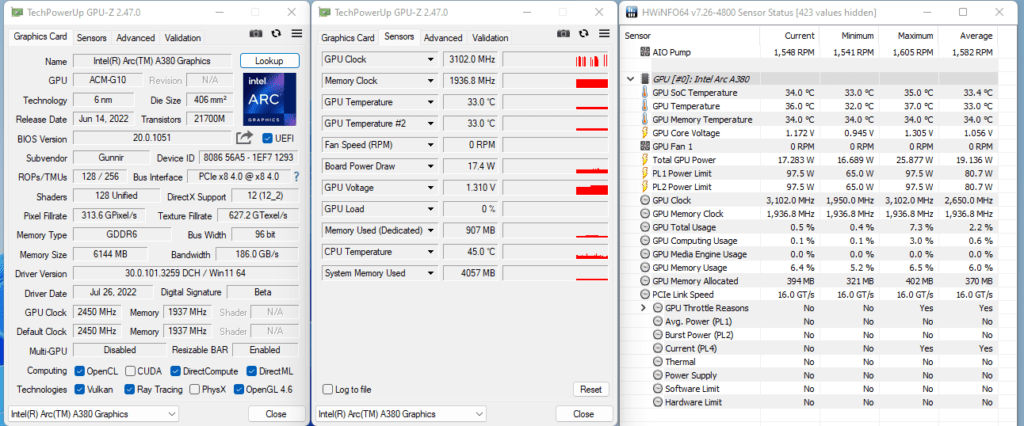

Two weeks ago, I teased the GPU clocked to 3.1 GHz on my Twitter. So, in this post, I want to give you a quick update on what challenges exist with these cards and how far I got working around the issues.

Arc Control Toolkit

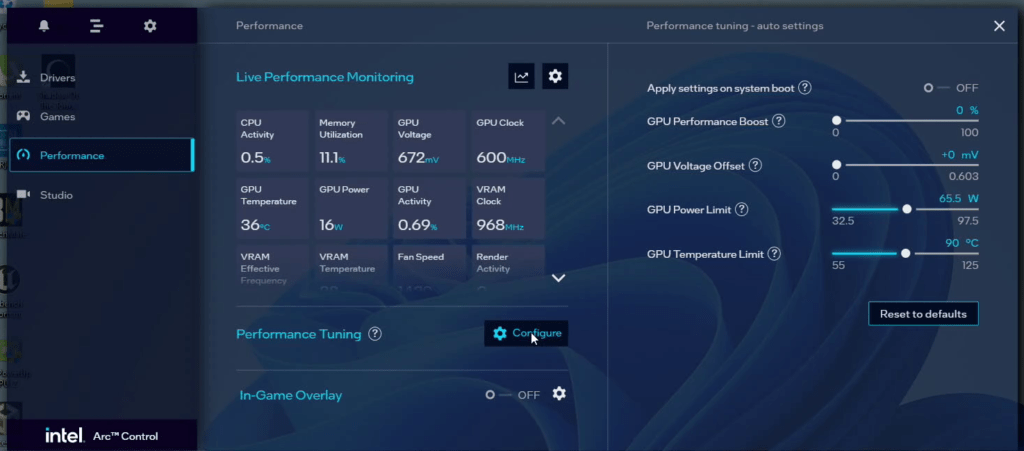

The first issue that most A380 owners are familiar with is the limited overclocking tools available in the driver software.

As TechPowerUp points out in their review of the GUNNIR A380, GPU overclocking is available within the Arc Control software package, but it’s a fairly limited implementation:

- First, GPU overclocking itself is in the form of a percentage overclock rather than the actual frequency. Furthermore, this percentage doesn’t really reflect the actual frequency increase. Setting +31% in the driver software results in a GPU frequency overclock of about 10% from 2450 MHz to 2696 MHz.

- Second, while a GPU voltage slider is available, it’s not doing anything.

- Third, there are no memory overclocking options available.

- Fourth, there is a working option available to adjust the power limit. But, again, the implementation isn’t very straightforward as it’s a GPU-only measurement instead of a total board power measurement.
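To make the gap in the first point concrete, here is a minimal Python sketch that compares the slider value with the measured frequency change. The function name is my own, and the figures are the ones quoted above.

```python
def measured_gain_percent(base_mhz: float, observed_mhz: float) -> float:
    """Return the real frequency increase as a percentage of the base clock."""
    return (observed_mhz - base_mhz) / base_mhz * 100.0


slider_percent = 31.0   # value entered in Arc Control
base_mhz = 2450.0       # default GPU frequency
observed_mhz = 2696.0   # frequency seen after applying the slider

gain = measured_gain_percent(base_mhz, observed_mhz)
print(f"slider +{slider_percent:.0f}% -> measured +{gain:.1f}%")
```

In other words, the slider value and the resulting clock increase differ by roughly a factor of three in this one data point. I wouldn’t assume that ratio holds across the whole slider range.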

In addition, there’s little to no information available from Intel on the GPU clocking or voltage topology. So, the first challenge is to determine how clocking and voltage work on this card.

In the next few minutes, I’ll review some of my findings and thoughts on the A380 topology. Please note that none of this is confirmed by Intel directly and, thus, may be wrong. So, take it for what it is.

Arc A380 Clocking Topology

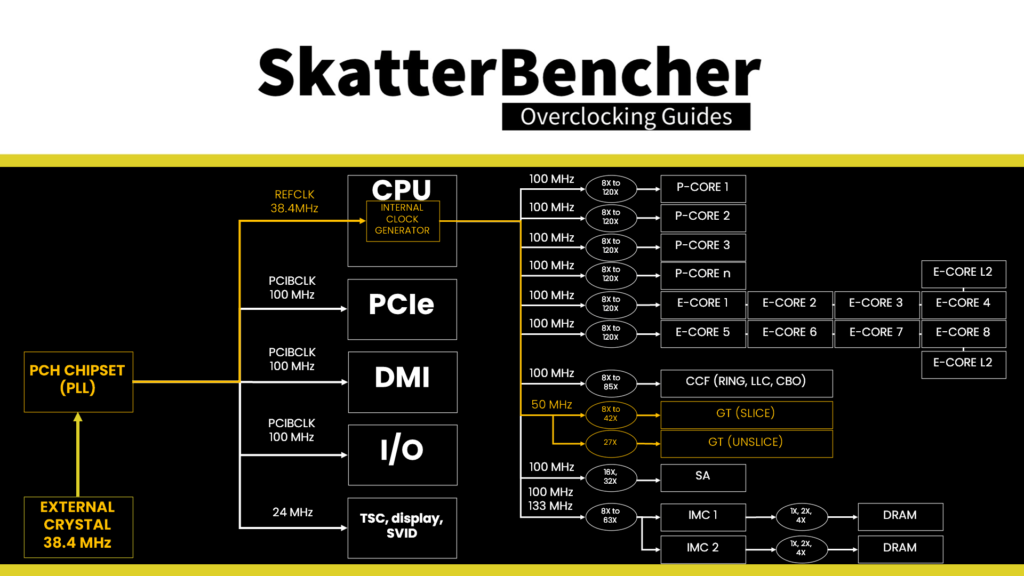

Intel has a lot of experience building graphics engines. You’ll find an Intel GPU inside most of its desktop and mobile processors. At first thought, you’d assume that discrete GPU clocking works the same way as on the integrated graphics. I’ve done a few Intel IGP overclocking videos in the past, so if you want more information on that, feel free to check out SkatterBencher #28 (UHD 750), SkatterBencher #33 (UHD 770), or SkatterBencher #38 (UHD 730).

Long story short, the IGP frequency is derived from a 100 MHz base clock frequency, first divided by two and then multiplied by a slice ratio. For Alder Lake integrated graphics, the maximum slice ratio is 42. So, the maximum IGP frequency is 2.1 GHz before base clock adjustments. The IGP can overclock to almost 2.4 GHz with base clock frequency adjustments.
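The IGP arithmetic above fits in a few lines of Python. This is only a sketch of the formula as described; the function names are mine, and the base clock needed for 2.4 GHz is a back-of-the-envelope figure, not a measured setting.

```python
def igp_frequency_mhz(bclk_mhz: float, slice_ratio: int) -> float:
    """IGP clock = (base clock / 2) * slice ratio."""
    return bclk_mhz / 2 * slice_ratio


def bclk_for_target_mhz(target_mhz: float, slice_ratio: int) -> float:
    """Base clock needed to reach a target IGP clock at a given ratio."""
    return target_mhz * 2 / slice_ratio


MAX_SLICE_RATIO = 42  # Alder Lake maximum, per the text above

print(igp_frequency_mhz(100.0, MAX_SLICE_RATIO))               # 2100.0
print(round(bclk_for_target_mhz(2400.0, MAX_SLICE_RATIO), 1))  # 114.3
```

So getting close to 2.4 GHz at the maximum ratio implies a base clock somewhere around 114 MHz.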

The discrete GPU clocking works … in a totally different way. In fact, from what I’ve gathered, it works somewhat like NVIDIA GPUs.

With special software tools, you can extract from the driver a voltage-frequency curve. In the driver, we find 130 distinct V/F points defined, ranging from 300 MHz to 2450 MHz explicitly stated. That said, from testing, it appears the curve extends beyond 2450 MHz. Each V/F point has a frequency and voltage associated with it. The GPU can select the optimal V/F point depending on the current situation.

The GPU supports two types of overclocking: offset mode and lock mode.

In offset mode, you can offset the factory-fused voltage-frequency curve by a specific frequency or voltage. The behavior is similar to NVIDIA graphics cards in that increasing the voltage offset also increases the maximum operating frequency.

On NVIDIA graphics cards, the reliability voltage, Vrel, defines the maximum warranted voltage. The maximum voltage, Vmax, is the highest voltage that AIB partners can enable on their products. On NVIDIA cards, a percentage slider enables the range beyond Vrel up to Vmax.

Similarly, on the A380, increasing the offset moves you up along the factory-fused voltage-frequency curve. It gives you access to V/F points with higher voltage and frequency.

In lock mode, you can override the highest available V/F point within the warranted range (in this case, 2450 MHz) with a manual voltage and frequency.
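To illustrate the difference between the two modes, here is a toy Python model of a V/F curve. It is a sketch under heavy assumptions: the five points and all voltages are made up (the real curve has 130 points), the idea of a "voltage ceiling" that the voltage offset raises is my own simplification, and none of the names correspond to anything in the driver or in our tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VFPoint:
    freq_mhz: int
    volt_mv: int


# Hypothetical five-point curve. The real driver curve has 130 points;
# these voltages are invented purely for illustration.
FUSED_CURVE = [
    VFPoint(300, 600),
    VFPoint(1000, 700),
    VFPoint(2000, 900),
    VFPoint(2450, 1000),  # highest point in the warranted range
    VFPoint(2600, 1050),  # assumed point beyond the stated range
]
WARRANTED_MAX_MHZ = 2450


def top_point(curve, voltage_ceiling_mv):
    """Highest-frequency point whose voltage fits under the ceiling."""
    allowed = [p for p in curve if p.volt_mv <= voltage_ceiling_mv]
    return max(allowed, key=lambda p: p.freq_mhz)


def apply_frequency_offset(curve, offset_mhz):
    """Offset mode (frequency): same voltages, every frequency shifted."""
    return [VFPoint(p.freq_mhz + offset_mhz, p.volt_mv) for p in curve]


def apply_lock(curve, freq_mhz, volt_mv):
    """Lock mode: replace the top warranted point with a manual pair."""
    return [
        VFPoint(freq_mhz, volt_mv) if p.freq_mhz == WARRANTED_MAX_MHZ else p
        for p in curve
    ]


default_ceiling_mv = 1000  # assumed default voltage ceiling
voltage_offset_mv = 50     # assumed voltage offset

# Stock: the GPU tops out at the 2450 MHz point.
print(top_point(FUSED_CURVE, default_ceiling_mv))
# Voltage offset: a higher ceiling exposes the next point up the curve.
print(top_point(FUSED_CURVE, default_ceiling_mv + voltage_offset_mv))
# Frequency offset: same voltage, higher frequency at every point.
print(top_point(apply_frequency_offset(FUSED_CURVE, 100), default_ceiling_mv))
# Lock: the top warranted point is overridden with an arbitrary manual pair.
print(top_point(apply_lock(FUSED_CURVE, 2900, 1000), default_ceiling_mv))
```

The point of the model is only that offset mode keeps you on (a shifted copy of) the fused curve, while lock mode replaces a single point outright.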

With the help of Shamino, and after many troubleshooting attempts, we built a custom tool that allowed us to try out both methods. For the time being, I won’t share the tool publicly, for two reasons:

- I’m not sure to what extent the tool contains proprietary information, so I want to be on the safe side.

- The tool is in a very rough implementation stage, and you must be extremely careful setting voltage and frequencies. Otherwise, you risk running almost 2V through the GPU, as I did at some point.

I can be very brief on the memory overclocking front: it is simply not supported. At least, not according to the information we can extract via the driver where it states explicitly that memory overclocking is not supported.

Arc A380 Power Limits

With the overclocking tool problem solved, one would expect the rest to go smoothly. Just push it to the max. Well, not so fast. While the tool we built provides us with the frequency and voltage control we need to push the card to the limit, there are still some limitations.

The first limitation is the power limit. The power limit definition for the Intel discrete GPUs is very similar to the architecture on the CPU side. There’s a PL1, PL2, and PL4.

PL1 is the long-term sustained power limit, PL2 is the short-term burst power limit, and PL4 is the peak power limit. These three power definitions have associated throttle mechanisms that trigger when the limit is surpassed.

The GPU has other throttle mechanisms related to temperature and power supply stability, but these are not relevant now.

PL1 and PL2 are set to 65W by default and can be increased to 97.5W via the driver interface. With specialized tools, you can increase these values to well over 250W, if needed.

PL4 is set to 800W by default and cannot be increased, even with specialized tools. Unfortunately, the PL4 limit is the blocker that’s preventing me from finishing the SkatterBencher video.

You can find the PL4 limit throttle flag in HWiNFO. When the PL4 limit is triggered, the GPU driver will automatically reduce the operating frequency. So, even when I have the GPU clocked at 3.1 GHz, because the PL4 limit is triggered, the actual frequency during a 3D load is throttled to about 2.3 GHz.
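As a rough mental model of the three limits, here is a small Python sketch that flags which limits a given set of power readings would exceed. How the GPU actually measures or estimates sustained, burst, and peak power is exactly what I don’t know, so the three readings and the function itself are hypothetical; only the limit values come from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PowerLimits:
    pl1_w: float  # long-term sustained limit
    pl2_w: float  # short-term burst limit
    pl4_w: float  # peak limit


def triggered_limits(limits, sustained_w, burst_w, peak_w):
    """Return the names of the limits exceeded by the three power readings."""
    flags = []
    if sustained_w > limits.pl1_w:
        flags.append("PL1")
    if burst_w > limits.pl2_w:
        flags.append("PL2")
    if peak_w > limits.pl4_w:
        flags.append("PL4")
    return flags


stock = PowerLimits(pl1_w=65.0, pl2_w=65.0, pl4_w=800.0)
raised = PowerLimits(pl1_w=97.5, pl2_w=97.5, pl4_w=800.0)  # driver maximum

# Hypothetical readings: 80 W sustained, 90 W burst, 120 W peak.
print(triggered_limits(stock, 80.0, 90.0, 120.0))   # ['PL1', 'PL2']
print(triggered_limits(raised, 80.0, 90.0, 120.0))  # []
```

Raising PL1 and PL2 clears those two flags in this model, but nothing in it can clear a PL4 flag, which mirrors the situation on the card: the one limit I can’t raise is the one that throttles.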

I’ve tried many things to resolve the PL4 limit, but unfortunately, I’ve yet to come up with a viable solution. To give you some ideas about the thought process, here’s how I’ve approached the problem.

Arc A380 PL4 Challenge

We actually know a lot about the Intel power limits from the CPU side of their business. Therefore, we know that PL4 is the ultimate safeguard against power spikes and is a limit that shall not be exceeded. What we don’t know is how it is implemented on discrete GPUs.

There are several options:

- Maybe the power limit is implemented like on high-end NVIDIA graphics cards. Here, a separate IC reports the 12V input power to the GPU by measuring the input voltage and voltage drop over a shunt resistor. In this case, we should be able to work around the power limitation by shunt-modding.

- Alternatively, it may work similarly to AMD graphics cards. Here, the VRM controller reports electrical information such as measured voltage and current back to the GPU via the SVI2 interface. In this case, we should be able to work around the issue by disabling this voltage controller function. For more information on this, you can check SkatterBencher #41 with the RX 6500 XT.

- Another alternative is that the power limit is implemented like on low-end NVIDIA graphics cards. Here, the power is an internal estimate based on the GPU VID request to the voltage controller and an internal current estimate based on actual GPU load. In this case, we should be able to work around the power limitation by forcing the GPU to use a low VID and manually increasing the output voltage. For more information on this, you can check out SkatterBencher #40 with the GeForce GT 1030.
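For the first option, the reason shunt-modding works comes down to Ohm’s law, which the Python sketch below walks through. The resistor values and load current are hypothetical, and the function names are mine. The only assumption about the monitoring IC is that it converts the measured voltage drop to current using the original shunt value.

```python
def parallel_ohm(r1_ohm: float, r2_ohm: float) -> float:
    """Equivalent resistance of two resistors in parallel."""
    return r1_ohm * r2_ohm / (r1_ohm + r2_ohm)


def reported_power_w(input_v, load_current_a, actual_shunt_ohm, assumed_shunt_ohm):
    """Power the monitoring IC reports when the physical shunt differs
    from the value the IC assumes."""
    drop_v = load_current_a * actual_shunt_ohm      # real drop across the shunt
    sensed_current_a = drop_v / assumed_shunt_ohm   # current the IC infers
    return input_v * sensed_current_a


INPUT_V = 12.0
LOAD_A = 10.0            # hypothetical load current, so 120 W of real draw
STOCK_SHUNT_OHM = 0.005  # hypothetical 5 mOhm shunt

# Shunt mod: solder a second 5 mOhm resistor on top of the stock shunt.
modded_shunt_ohm = parallel_ohm(STOCK_SHUNT_OHM, 0.005)

stock_report = reported_power_w(INPUT_V, LOAD_A, STOCK_SHUNT_OHM, STOCK_SHUNT_OHM)
modded_report = reported_power_w(INPUT_V, LOAD_A, modded_shunt_ohm, STOCK_SHUNT_OHM)

print(round(stock_report, 1), round(modded_report, 1))
```

With these made-up numbers, the modded card reports about half of its real power draw, which is why the approach only helps if such a shunt and monitoring IC exist on the board in the first place.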

The first option can be evaluated by checking the physical PCB for any shunt resistors or additional ICs. There are none present. That makes sense because it would add substantial cost to the PCB.

The second option can be evaluated by checking the voltage controller datasheet. The GPU voltage controller on the GUNNIR A380 is the Monolithic Power Systems MP2940A. Unfortunately, the datasheet is not publicly available. However, since the controller is widely used on Intel motherboards by various motherboard vendors, I got my hands on the necessary details. Since the voltage controller supports a PMBus interface, we can talk to the controller using the ElmorLabs EVC2 device. A special thanks goes out to ElmorLabs Discord user Whiteshark for helping me set up the device support.

To make a long story short, it was relatively straightforward to set up the PMBus communication and get the voltage controller functions to work. While the controller definitely supports functions to feed information back, it doesn’t seem like any information is relayed from the controller back to the GPU. So, the second option is also off the table.

As it would take quite a lot of time to go through how the third option works on low-end NVIDIA graphics cards, I will refer you to SkatterBencher #40, where I took the time to explain it in detail. Long story short, we cut the communication line between the GPU and the voltage controller. As a result, we can force the GPU to boot at a low voltage (usually around 0.6V) and manually adjust the output voltage via the voltage controller by manipulating the feedback circuit.

And here’s where my progress grinds to a halt as I’ve simply been unable to work out how to make this work. There are two parts of this challenge that need to be addressed:

- Can the GPU boot up if the SVID connection with the voltage controller is cut? If yes, what is that boot voltage? And

- Can I modify the voltage controller to gain manual control over the voltage output without triggering electrical protections?

I currently have no answers for either question, so, as I said, my progress has ground to a halt.

Next Steps

So, what are the next steps? Well, there are still a couple.

Ideally, I would find a way to override the PL4 limit using a software tool. Some tools can override PL4, but for now I only have access to tools that can lower the limit.

If that doesn’t work, I can continue to work on the voltage hardware modification method. However, I will need to look for expert assistance to make progress.

A far-out alternative is VBIOS modding. While I did manage to make a dump of the BIOS, I have minimal experience with this. Also, the BIOS would likely need a valid digital signature, just like with NVIDIA and AMD graphics cards.

Suppose, somehow, I manage to get around the PL4 throttling problem. In that case, the next step will undoubtedly be to find a solution for the overheating 2-phase VRM. Quick tests showed me that the VRM hits over 90 degrees in 3D load even with low voltages. One option is to get ahold of the Bykski full-cover water block for this graphics card and liquid cool the VRM.

So, as you can see, I’m not yet out of options, but I’m not expecting to sort out this SkatterBencher content any time soon.

Anyway, that’s all for today! If I somehow get it working, I’ll be sure to put out a video providing more details. If not, the next video I’ll put up will probably be a CPU overclocking guide.

I’d like to thank my Patreon supporters for supporting my work.

As per usual, if you have any questions or comments, feel free to drop them in the comment section below.

See you next time!

Julian

Hello Pieter! Can you share the bios .bin file please?

Pieter

Here’s the download link: https://skatterbencher.com/wp-content/uploads/stuff/a380_bios

avi

Pieter, could you please do something about my GIGABYTE Arc A310 WINDFORCE OC (GV-IA310WF2-4GD)? It’s rated at 50W, but it’s stuck at 43W and it’s not even getting hot. I don’t get any raw performance. It’s been hard lately, so please.

Marco Ricci

In my post below I meant to write Sparkle (Arc A380 ELF 6GB).

Marco

Hi Pieter,

I’ve played with my A380 (Wirkle, one fan only) and I’ve been able to push it up from its 1800 to 2450MHz thanks to the indications/tools you provided (thanks a lot by the way). It works better in Fulmark at that frequency with 0,8v in Lock (59w and temperature around 63 degrees). While it still has a thermal margin to go the GPU does not accept frequency inputs higher than 2450. The voltage doesn’t rather seem to be limited.

I would like to try it at 2900 as in your experiment or at least at 1750. Any idea how to override that hard limit? Have a great new year. marco

Pieter

Thanks for the kind words!

If I understand you correctly, you’re saying that it’s not possible to set the OC Lock frequency over 2450 MHz anymore?

SkatterBencher #65: Intel Core i9-11980HK Overclocked to 5200 MHz - SkatterBencher

[…] is the same PL4 limit that I discussed when we overclocked the Intel Arc A380 graphics […]

SkatterBencher #44: Intel Arc A380 Overclocked to 2900 MHz - SkatterBencher

[…] As you can see from the title, this is SkatterBencher #44 and not the successor of SkatterBencher #56. I was supposed to finish the project sometime in August last year. But due to the many challenges with Arc overclocking, I published a blog post titled “Update on my 3.1GHz Intel Arc A380.” […]

Alex - Freakezoit

Hey Peter , i have done a lot of tests with my A770 which behaves the same as your A380.

After fiddling around a while with all posible settings (OC lock voltage and offset voltage) i found the issue you mentioned but it is different from what you thought it is.

1. it is not PL4 that caused the downclock i tested mine up to 450W gpu only load… and Pl4 is triggered but it doesn`t lower the clock .

2. It seems to be an Voltage – Clock comb. limit , it can go up to 1.207v before it lowers the clock if you set the voltage higher than that it lowers the clock under load , for mine @ around 1.25 -26v clock goes down to 2350mhz @ load . If you lower that voltage it will go up again.

Pl4 was never an issue for me even under 3d load for hours @ 300W+ .

It seems that with offset max idle voltage is 1.212v , it can`t go higher than that.

Example if you set clock offset to 120 and voltage offset to 125 , it will be allready maxed out ,

at voltage offset 135 it will be 1.187v and @ 160 it will be @ 1.212v and so on.

So for me it seem like Gpu Boost only works up to Vmax 1.212v above that it goes down until 2350mhz (boost off).

That is what i found out.

Regards

Freakezoit@ Hwbot

Pieter

Thanks for sharing your insights! It’s quite a coincidence because I recently revisited the A380 to finish the SkatterBencher project I started last year.

My findings are the same as yours: beyond 1.17V the GPU forcibly reduces the frequency. Every 10mV increase is a 50MHz decrease. I’m not sure if that was the same with the launch drivers or the behavior has changed with newer drivers.

I hope to publish my video in the next two weeks.

Homebrew Intel Arc OC Tool Released by Legendary Overclocker - Viral Works Tech News Blog

[…] “IMPORTANT! Be careful when setting the voltage! On my A380 I have to set 1.00000 for the default voltage, then 0.99999 for 10mV less and 1.00001 for 10mV more. If you’re not careful, it’s possible to set >2V as I demonstrated before.” […]
